The ability to “update” data is often not the top priority when designing analytical databases.

First, the source of truth for the data is often somewhere else (like a Transactional Database); that's where the brunt of the UPDATEs take place. And secondly, what you are looking for when analysing is for the ability to query large quantities of data in multiple ways.

That is why these databases most of the time optimise for fast recovery (SELECTs) and fast ingestion (INSERTs).

But there are always scenarios where you need to update or replace your analytical data: you might have reconciliation processes over your transactions that affect your original data. Or maybe simply your ingestion process was faulty and ingested inaccurate data for a period of time.

Updating that data can very often be cumbersome, slow and resource intensive, often due to how the data is partitioned or replicated for performance reasons. This is especially true when you are dealing with replicated tables over multiple servers, and even harder if you have materialized views over those tables, since these will be partitioned or replicated too; that means that not only the original data needs to be updated, but that those views will also have to be regenerated.

So when the inevitable time to update the data comes, it can easily turn into a nightmare: replacing entire partitions is possible, but you require intimate knowledge of how the data is organised, partitioned or replicated. Also, you might want to replace only part of those partitions or just the data that satisfies certain conditions.

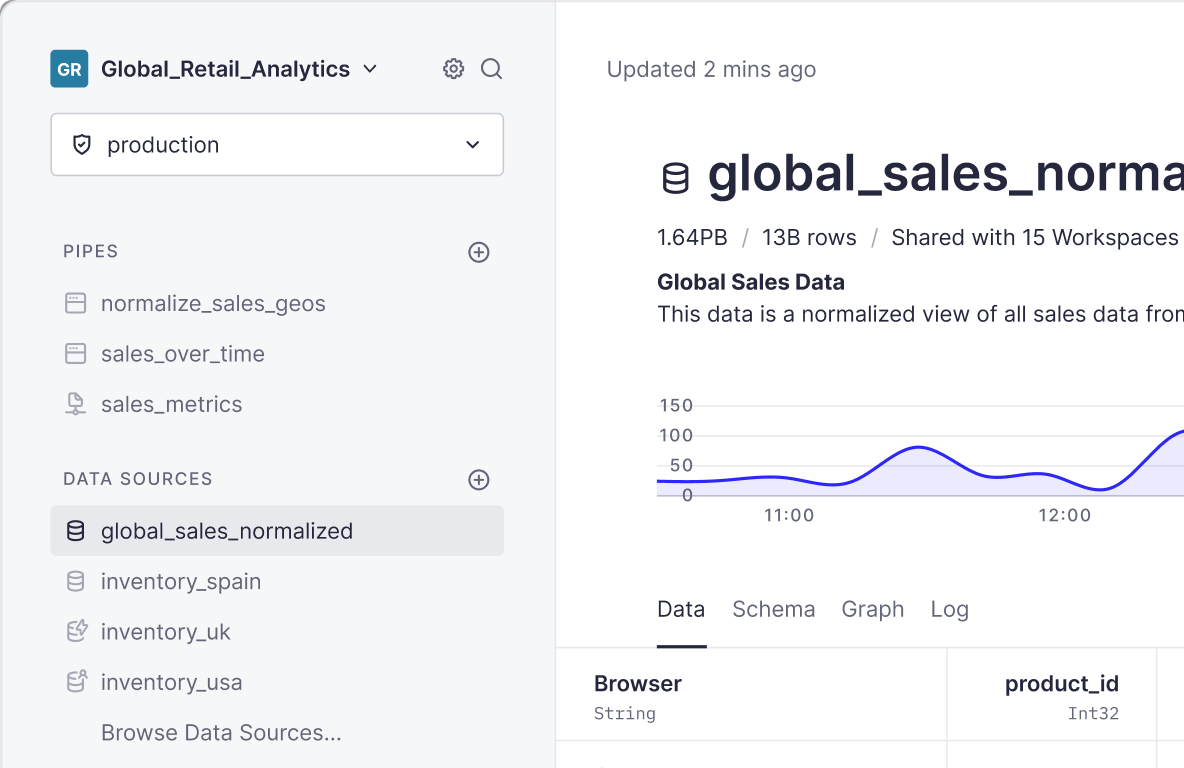

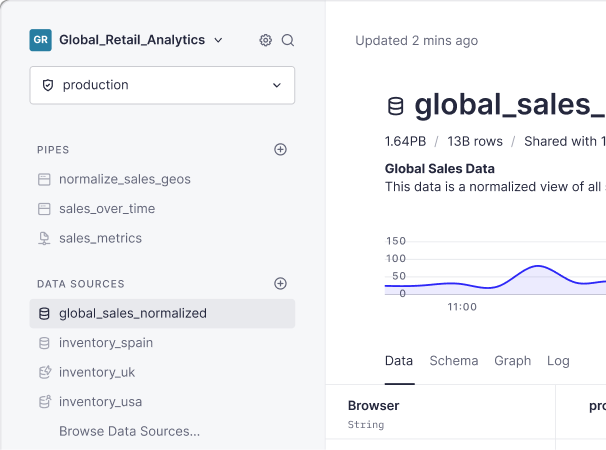

At Tinybird we want to make these kinds of updates seamless, so that you can replace data with full flexibility and without having to worry about replication, how the data is partitioned or anything of the sort.

At the same time, we want to ensure that any materialized view that feeds off the data you are replacing gets replaced seamlessly as well, without ever exposing inconsistent or partially replaced data.

That’s where our “Replace by condition” functionality comes in: we talked about the ability to “replace” entire data sources already in a previous post (you can also check mode=replace in our DataSources API documentation).

It is now possible to specify a condition under which only a part of the data is replaced during the ingestion process.

For instance, let’s say you have a Tinybird data source called events with an event_datetime column and that you want to re-ingest a CSV with the data for January 1st, 2020. In order to update the data you only need to pass the parameter “replace_condition” with the condition toDate(event_datetime) = ‘2020-01-01’

Your API call would look like this:

And what if you want to replace events of a certain event_type over the last 30 days?

In that case, your replace_condition could look like something like:

Isn’t that cool? Two great things to note about this:

- With

replace_condition, you don’t have to worry about how many partitions your data touches: we take care of that stuff while ensuring all downstream materialized views get regenerated seamlessly. - The replace condition ALSO guarantees that any data not matching the condition doesn't get ingested. That means that you can use a CSV file that represents a superset of what you want to replace to just replace some parts. E.g., use a csv that contains all the transactions for a certain period even if you just want to replace a few of them.

Does this sound familiar? Want to give it a try? Get in touch or join our waiting list